The problem with this approach is that the outlier can influence the curve fit so much that it is not much further from the fitted curve than the other points, so its residual will not be flagged as an outlier.

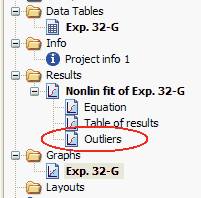

One option is to perform an outlier test on the entire set of residuals (distances of each point from the curve) of least-squares regression. Unfortunately, no outlier test based on replicates will be useful in the typical situation where each point is measured only once or several times.

If you have plenty of replicate points at each value of X, you could use such a test on each set of replicates to determine whether a value is a significant outlier from the rest. Several formal statistical tests have been devised to determine if a value is an outlier, reviewed in. With such an informal approach, it is impossible to be objective or consistent, or to document the process. Outlier elimination is often done in an ad hoc manner. Removing such outliers will improve the accuracy of the analyses. These points will dominate the calculations, and can lead to inaccurate results. But some outliers are the result of an experimental mistake, and so do not come from the same distribution as the other points. In this case, removing that point will reduce the accuracy of the results. Even when all scatter comes from a Gaussian distribution, sometimes a point will be far from the rest. Even a single outlier can dominate the sum-of-the-squares calculation, and lead to misleading results. However, experimental mistakes can lead to erroneous values – outliers. This assumption leads to the familiar goal of regression: to minimize the sum of the squares of the vertical or Y-value distances between the points and the curve. Nonlinear regression, like linear regression, assumes that the scatter of data around the ideal curve follows a Gaussian or normal distribution. Our method, which combines a new method of robust nonlinear regression with a new method of outlier identification, identifies outliers from nonlinear curve fits with reasonable power and few false positives. When analyzing data contaminated with one or several outliers, the ROUT method performs well at outlier identification, with an average False Discovery Rate less than 1%. When analyzing simulated data, where all scatter is Gaussian, our method detects (falsely) one or more outlier in only about 1–3% of experiments. 
Because the method combines robust regression and outlier removal, we call it the ROUT method. We then remove the outliers, and analyze the data using ordinary least-squares regression. To define outliers, we adapted the false discovery rate approach to handling multiple comparisons. We devised a new adaptive method that gradually becomes more robust as the method proceeds. We first fit the data using a robust form of nonlinear regression, based on the assumption that scatter follows a Lorentzian distribution. We describe a new method for identifying outliers when fitting data with nonlinear regression. However, we know of no practical method for routinely identifying outliers when fitting curves with nonlinear regression. Outliers can dominate the sum-of-the-squares calculation, and lead to misleading results.
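The three stages described in the text (a robust fit, false discovery rate based outlier flagging, then ordinary least squares on the remaining points) can be illustrated with a minimal Python sketch. It is not the published ROUT algorithm, and several pieces are assumptions made for illustration: the exponential-decay `model`, the simulated data, and the helper names `robust_sd` and `rout_like_fit` are hypothetical; SciPy's `loss="cauchy"` (a Lorentzian-type loss) stands in for the adaptive robust fit; the robust standard deviation is taken as the 68.27th percentile of the absolute residuals scaled by N/(N-K); and a plain Benjamini-Hochberg step-up test replaces the adapted false discovery rate procedure.

```python
import numpy as np
from scipy.optimize import least_squares
from scipy.stats import t as student_t


def model(params, x):
    # Hypothetical example model: single exponential decay.
    a, b = params
    return a * np.exp(-b * x)


def residuals(params, x, y):
    return y - model(params, x)


def robust_sd(res, n_params):
    # Assumed robust scale: 68.27th percentile of |residuals|, scaled by N/(N-K).
    n = res.size
    return np.percentile(np.abs(res), 68.27) * n / (n - n_params)


def rout_like_fit(x, y, p0, q=0.01):
    n, k = x.size, len(p0)

    # Stage 1: robust fit. An ordinary fit supplies a starting point and a
    # rough scale; the Cauchy (Lorentzian-type) loss then downweights points
    # that lie far from the curve.
    start = least_squares(residuals, p0, args=(x, y))
    scale = robust_sd(residuals(start.x, x, y), k)
    robust = least_squares(residuals, start.x, loss="cauchy",
                           f_scale=scale, args=(x, y))
    res = residuals(robust.x, x, y)
    rsdr = robust_sd(res, k)

    # Stage 2: two-tailed P value for each residual, then a plain
    # Benjamini-Hochberg step-up test at false discovery rate q.
    p = 2.0 * student_t.sf(np.abs(res) / rsdr, df=n - k)
    order = np.argsort(p)
    passed = np.nonzero(p[order] <= q * np.arange(1, n + 1) / n)[0]
    flagged = np.zeros(n, dtype=bool)
    if passed.size:
        flagged[order[: passed[-1] + 1]] = True

    # Stage 3: ordinary least squares on the points that were not flagged.
    keep = ~flagged
    clean = least_squares(residuals, robust.x, args=(x[keep], y[keep]))
    return clean.x, flagged


# Simulated data: Gaussian scatter plus one gross error at index 10.
rng = np.random.default_rng(0)
x = np.linspace(0.0, 10.0, 30)
y = model((10.0, 0.5), x) + rng.normal(0.0, 0.2, x.size)
y[10] += 5.0

params, flagged = rout_like_fit(x, y, p0=[8.0, 0.3])
print("flagged indices:", np.nonzero(flagged)[0])
print("least-squares estimates after removal:", params)
```

Because the residuals are measured from the robust curve rather than the least-squares curve, the contaminated point is less able to pull the fit toward itself and hide its own residual. The threshold `q` sets how aggressively points are flagged.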